Multilingual Script & Encoded String Audit: wfwf259, Xxvidéo, μαιλααδε, ЯЮЙЮНЮВЮБЮМЮК, ЯЮБЮМЯЮБ, аЗббаЛбаЕбаКб, баНаИбаКбаЗбаКаЕб, бббаМбаКаЕбб, рЈЊрЉАрЈрЈОрЈЌрЉXxx

This audit examines the provenance, encoding state, and localization implications of multilingual strings such as wfwf259 and its variants. Each string is classified by script, language, and encoding, with normalization challenges and potential homoglyphs noted along the way. The audit also records data lineage and practical pitfalls, then outlines parsing strategies that avoid semantic drift. The goal is a reproducible procedure that still holds as more scripts and encodings enter the picture.

What Multilingual Script Audits Reveal About Encoding

Multilingual script audits show how encoding choices reflect both linguistic diversity and practical constraints across writing systems. An audit traces the provenance of each script: its historical influences, how it was adopted, and what interoperability it needs. It also exposes encoding ambiguity, where the same bytes or similar-looking glyphs are interpreted differently across platforms and the text is misread as a result. Examining these cases methodically makes the underlying design decisions and data lineage explicit, and shows where an encoding scheme can be adapted to serve more languages.
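A minimal sketch of encoding ambiguity, assuming the text was written as UTF-8 and then read with a single-byte Cyrillic codec. The codec pair and the helper names `misdecode` and `repair` are illustrative assumptions, not something the audit prescribes.

```python
def misdecode(text: str, wrong: str = "iso8859_5") -> str:
    """Encode as UTF-8, then decode with the wrong single-byte codec."""
    return text.encode("utf-8").decode(wrong)


def repair(garbled: str, wrong: str = "iso8859_5") -> str:
    """Reverse misdecode(); raises if bytes were lost along the way."""
    return garbled.encode(wrong).decode("utf-8")


original = "Xxvid\u00e9o"            # "Xxvidéo"
garbled = misdecode(original)
print(garbled)                        # the single é becomes two Cyrillic letters
print(repair(garbled) == original)    # True
```

The round trip only works while every byte survives. Once a pipeline drops control characters or replaces unknown bytes, the original cannot be recovered reliably, which is why recording the encoding at each step matters.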

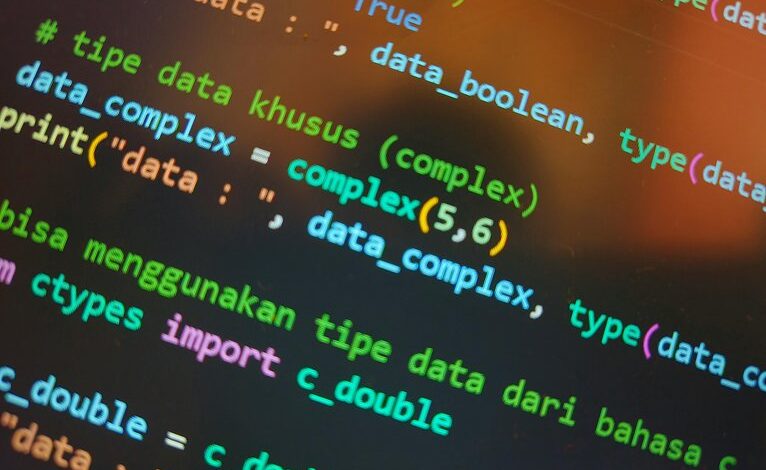

How to Classify Strings by Script, Language, and Encoding

Classifying strings by script, language, and encoding requires a structured approach that breaks each string into its constituent elements before drawing conclusions about the whole. The process has three parts: script classification, contextual language cues, and encoding detection. Applied consistently, it reveals patterns across alphabets and diacritics. Clear criteria for each category keep the audit reproducible and keep speculative or duplicated findings out of the results.
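The script and encoding steps can be sketched with Python's standard library alone. This is a rough heuristic under stated assumptions: the script label is taken from the first word of each character's Unicode name, and encoding detection is reduced to checking whether bytes decode strictly. The helper names `script_of`, `classify`, and `decodes_as` are hypothetical, and language identification is left out because the standard library has no tool for it.

```python
import unicodedata
from collections import Counter


def script_of(ch: str):
    """Approximate script label: first word of the Unicode character name."""
    if not ch.isalpha():
        return None  # digits, marks, punctuation, and spaces are skipped
    return unicodedata.name(ch, "UNKNOWN").split()[0]


def classify(text: str) -> dict:
    """Count letters per approximate script label."""
    return dict(Counter(s for s in map(script_of, text) if s))


def decodes_as(raw: bytes, encoding: str) -> bool:
    """True if the bytes are valid in the given encoding (strict decode)."""
    try:
        raw.decode(encoding)
        return True
    except UnicodeDecodeError:
        return False


print(classify("wfwf259"))                 # {'LATIN': 4}
print(classify("\u03b1\u03b2\u03b3 abc"))  # {'GREEK': 3, 'LATIN': 3}
print(decodes_as(b"\xe9", "utf-8"))        # False: a lone 0xE9 is not valid UTF-8
```

A strict decode can only rule an encoding out. Many byte sequences are valid in several single-byte encodings at once, so a successful decode is evidence, not proof.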

Practical Pitfalls and Security Considerations in Multilingual Text

In text that crosses languages and cultures, practical pitfalls arise from script mixing, homoglyphs, and encoding ambiguities. Each can subtly distort meaning or mislead automated checks, so a disciplined audit must anticipate them before deployment.

The main risks are localization errors and encoding pitfalls. Guarding against them means validating content rigorously across scripts, fonts, and normalization states, so that multilingual workflows do not suffer semantic drift, data leakage, or misclassification.
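One way to catch script mixing and likely homoglyphs is to flag tokens whose letters come from more than one script. The sketch below uses the same Unicode-name heuristic, and `scripts_in` and `mixed_script_tokens` are hypothetical helper names. It will also flag legitimate mixtures, such as Japanese text combining kana and kanji, so a hit is a prompt for review, not a verdict.

```python
import unicodedata


def scripts_in(token: str) -> set[str]:
    """Approximate set of scripts used by the letters in a token."""
    scripts = set()
    for ch in token:
        if ch.isalpha():
            # First word of the Unicode name, e.g. "LATIN" or "CYRILLIC".
            scripts.add(unicodedata.name(ch, "UNKNOWN").split()[0])
    return scripts


def mixed_script_tokens(text: str) -> list[str]:
    """Whitespace-separated tokens that mix two or more scripts."""
    return [tok for tok in text.split() if len(scripts_in(tok)) > 1]


# The second word contains CYRILLIC SMALL LETTER A (U+0430) instead of Latin "a".
sample = "paypal p\u0430ypal"
for tok in mixed_script_tokens(sample):
    print(ascii(tok), sorted(scripts_in(tok)))
```

Printing with `ascii()` exposes the escaped code point, which helps when the two spellings render identically in a given font.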

Best Practices for Robust Parsing and Normalization

Reliable parsing and normalization in multilingual contexts depend on disciplined, repeatable procedures that anticipate script variety, encoding states, and font-rendering quirks.

Three practices carry most of the weight: deterministic pipelines, so the same input always yields the same output; canonical forms, so equivalent strings compare equal; and proactive error handling, so invalid input is reported and not silently altered.

Multilingual fidelity depends on consistent normalization, systematic validation, and transparent documentation. Together these make software auditable and keep it respectful of the scripts its users actually write in.
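These practices can be sketched as a small deterministic pipeline: decode strictly, normalize to one canonical form, and fail loudly on bad input. The choice of NFC for storage and NFKC plus case folding for comparison is an assumption made for illustration, as are the function names `canonicalize` and `match_key`; other projects may reasonably choose differently.

```python
import unicodedata


def canonicalize(raw: bytes, encoding: str = "utf-8") -> str:
    """Strictly decode bytes, then normalize to NFC for storage."""
    try:
        text = raw.decode(encoding, errors="strict")
    except UnicodeDecodeError as exc:
        # Report the problem; never substitute replacement characters silently.
        raise ValueError(
            f"invalid {encoding} input at byte offset {exc.start}"
        ) from exc
    return unicodedata.normalize("NFC", text)


def match_key(text: str) -> str:
    """Looser key for comparison: compatibility normalization plus case folding."""
    return unicodedata.normalize("NFKC", text).casefold()


decomposed = "e\u0301".encode("utf-8")               # "e" + combining acute accent
print(canonicalize(decomposed) == "\u00e9")          # True: composed into one code point
print(match_key("\uff21\uff22\uff23") == "abc")      # True: fullwidth letters fold to ASCII
```

Keeping the stored form (NFC) separate from the comparison key avoids losing distinctions that NFKC erases, such as fullwidth versus ASCII letters, while still letting equivalent strings match.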

Conclusion

The audit points to one conclusion: multilingual strings, whatever script they are written in, break in the same way when misencoded, and careful normalization brings that breakage under control. There is some irony in celebrating complexity while insisting on determinism, but the two fit together. Script classification, lineage tracing, and robust parsing work because the anomalies they target are predictable, not mysterious, and that predictability is what makes verification reproducible. Multilingual rigor is essential, not optional.