Record Consistency Analysis Batch – Puritqnas, Rasnkada, reginab1101, Site #Theamericansecrets

The record consistency batch process applied by Puritqnas, Rasnkada, reginab1101, and Site #Theamericansecrets follows a structured protocol that verifies each entry against predefined criteria. The approach is methodical: it emphasizes traceable results, documented provenance, and clear anomaly handling. It records delays, flags policy drift, and quarantines suspicious records for reprocessing. This yields a disciplined view of reliability, but the choice of thresholds and the downstream implications for analytics remain open questions that deserve careful scrutiny.

What Is Record Consistency Analysis Batch?

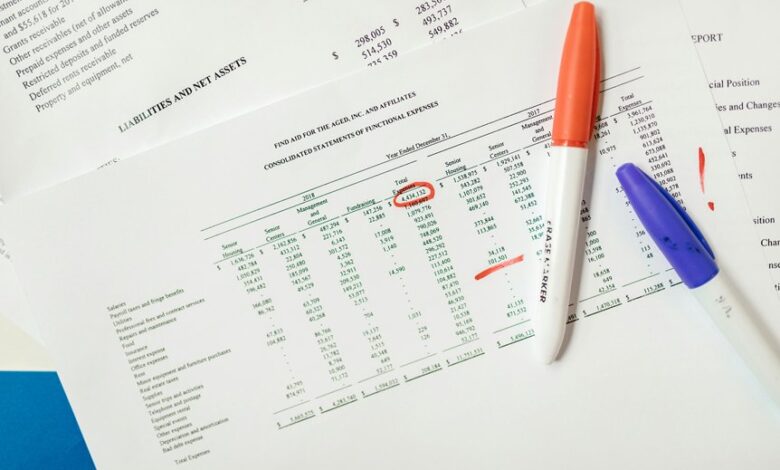

Record Consistency Analysis Batch refers to a structured procedure for verifying that the records in a batch meet predefined consistency criteria. The approach emphasizes objective evaluation, reproducible checks, and traceable results. Anomalies are treated with skepticism, and each one is documented in detail. The aims are alignment between records, data integrity, and auditable evidence across the entire dataset.

How Puritqnas, Rasnkada, reginab1101, Site Theamericansecrets Perform Batch Checks

Puritqnas, Rasnkada, reginab1101, and Site Theamericansecrets implement batch checks by applying standardized verification steps to every item in the dataset, ensuring that each record adheres to predefined rules and consistency criteria.

The process emphasizes purity checks and latency analysis: it scrutinizes anomalies and quantifies delays, keeping the checks rigorous while leaving data governance accessible and open.
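As a rough illustration of what applying the same verification steps to every record might look like, the sketch below runs a small set of rules over each item and measures ingestion delay. Everything in it is an assumption made for the example: the `Record` fields, the rule names, and the one-hour `MAX_LATENCY` threshold are hypothetical and are not taken from the process described here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable


@dataclass
class Record:
    # Hypothetical record layout, chosen only for this example.
    record_id: str
    value: str
    created_at: datetime   # when the source produced the record
    ingested_at: datetime  # when the batch received it


# Hypothetical consistency rules: rule name -> predicate that must hold.
RULES: dict[str, Callable[[Record], bool]] = {
    "id_present": lambda r: bool(r.record_id.strip()),
    "value_present": lambda r: bool(r.value.strip()),
    "timestamps_ordered": lambda r: r.ingested_at >= r.created_at,
}

# Assumed latency threshold; a real process would justify its own value.
MAX_LATENCY = timedelta(hours=1)


def check_record(record: Record) -> list[str]:
    """Return the names of every check the record fails."""
    failures = [name for name, rule in RULES.items() if not rule(record)]
    if record.ingested_at - record.created_at > MAX_LATENCY:
        failures.append("latency_exceeded")
    return failures


def check_batch(batch: list[Record]) -> dict[str, list[str]]:
    """Map the id of each failing record to the checks it failed."""
    results: dict[str, list[str]] = {}
    for record in batch:
        failures = check_record(record)
        if failures:
            results[record.record_id] = failures
    return results
```

Keeping the rules in a named mapping means each failure can be reported by rule name, which supports the traceable results the process calls for.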

What Batch-Level Checks Reveal About Data Reliability

How do batch-level checks illuminate data reliability in practice? Systematic evaluation uncovers inconsistencies across records, revealing hidden biases and sampling gaps. The analysis emphasizes data quality through traceable indicators, anomaly frequency, and alignment with source metadata. Batch auditing provides a disciplined view of error patterns, supporting skeptical, rigorous verification without overclaiming accuracy. Findings guide cautious interpretation rather than premature conclusions.
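One way to turn anomaly frequency into a traceable indicator is to summarize failures per batch and per rule. The sketch below assumes the checks have already produced a mapping from record id to the names of failed rules; the function name `anomaly_summary` and the shape of its output are illustrative choices, not part of the process described here.

```python
from collections import Counter


def anomaly_summary(failures_by_record: dict[str, list[str]], batch_size: int) -> dict:
    """Summarize how often checks failed across one batch.

    failures_by_record maps a record id to the rule names it failed;
    records with an empty list are treated as clean.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")

    # Count failures per rule to expose recurring error patterns.
    per_rule = Counter(
        rule for failed in failures_by_record.values() for rule in failed
    )
    # A record counts as flagged if it failed at least one rule.
    flagged = sum(1 for failed in failures_by_record.values() if failed)

    return {
        "batch_size": batch_size,
        "flagged_records": flagged,
        "anomaly_rate": flagged / batch_size,
        "failures_per_rule": dict(per_rule),
    }
```

A per-rule count shows whether a high anomaly rate comes from one systematic problem or from many unrelated ones, which matters when interpreting the results cautiously.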

Practical Remediation Steps and Downstream Impact on Analytics

Is remediation the true test of batch integrity, and if so, what concrete steps reliably restore trust without introducing new biases? Practitioners outline five steps: audit data provenance, quarantine suspicious records, implement exception handling, reprocess with validated pipelines, and document the triggers for policy drift. Downstream, analytics teams monitor stability metrics and close data gaps transparently. Outcomes stay traceable and auditable, and they are still treated with skepticism, which preserves analytical freedom.
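The quarantine and reprocessing steps can be sketched as two small functions. This is a minimal sketch under stated assumptions: the helper names `split_batch` and `reprocess`, and the caller-supplied `is_suspicious` and `repair` callables, are hypothetical and stand in for whatever checks and validated pipeline a real process would use.

```python
from typing import Any, Callable


def split_batch(
    records: list[Any], is_suspicious: Callable[[Any], bool]
) -> tuple[list[Any], list[Any]]:
    """Separate clean records from those that should be quarantined."""
    clean, quarantined = [], []
    for record in records:
        (quarantined if is_suspicious(record) else clean).append(record)
    return clean, quarantined


def reprocess(
    quarantined: list[Any],
    repair: Callable[[Any], Any],
    is_suspicious: Callable[[Any], bool],
) -> tuple[list[Any], list[tuple[Any, str]]]:
    """Try to repair quarantined records and re-check each result.

    Returns the recovered records plus (original record, reason) pairs
    for everything that could not be recovered, so that no record is
    dropped without a documented reason.
    """
    recovered, unresolved = [], []
    for record in quarantined:
        try:
            fixed = repair(record)
        except Exception as exc:  # exception handling: log it, do not abort the batch
            unresolved.append((record, f"repair failed: {exc}"))
            continue
        if is_suspicious(fixed):
            unresolved.append((record, "still fails checks after repair"))
        else:
            recovered.append(fixed)
    return recovered, unresolved
```

Re-running the same check after repair is a deliberate choice: a repaired record only rejoins the batch if it passes the criteria that originally flagged it.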

Conclusion

In the closing minutes, the batch's quiet metrics tighten like a vise, each flag and timestamp pointing to drift and delay. The auditors methodically trace provenance, quarantine anomalies, and rerun pipelines, all while maintaining a skeptical stance toward initial findings. As results cohere, a cautious confidence emerges, yet the last line of the log hints at inconsistencies that the checks have not yet caught. The analytical tension remains: is reliability verified, or merely reframed for the next audit?